AI Development

ResQ: A Disaster Response Platform

Timeline

12/01/2025 - Current

Tools

Cursor

Google AI Studio

Figma

Team

1 Founding Designer and 1 Back-end Engineer

Hi, I'm Tiffany Nguyen, and together with my partner Minh Ta, we built ResQ, a disaster response coordination platform that connects stranded victims with available rescuers through intelligent matching algorithms. This idea was born from a tragedy that hit close to home, started as a Google AI Studio hackathon project, and continues to grow every day. In this post, I'll share our journey, how AI helped us materialize an idea in a crazy short amount of time, and how you can apply the same workflow to bring your ideas to life.

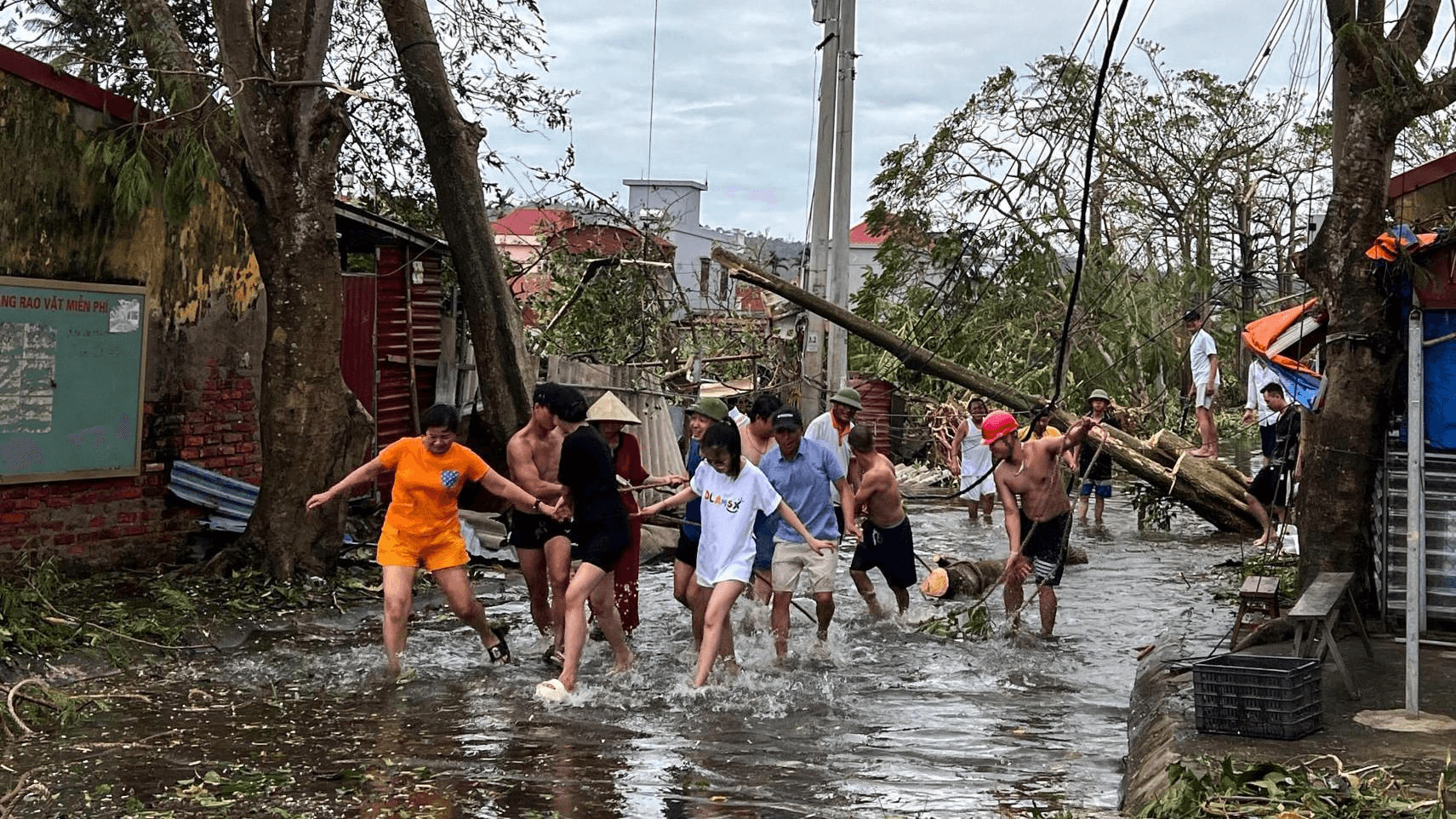

Background: Typhoon Yagi

In September 2024, Typhoon Yagi struck Vietnam. This was the most powerful storm we've seen in a long time (my dad has never experienced a storm of this scale in his entire life!). According to UN OCHA reports[^1], 321 people died, 1,978 were injured, and 3.6 million people were affected across 26 provinces. What's most heartbreaking to me is that many lives were lost waiting for help that arrived too late.

When I started researching, the numbers were staggering. The UN Multi-Sector Assessment estimated total damage at 81 trillion Vietnamese Dong (approximately $3.3 billion USD)[^2]. But beyond the financial impact, coordination itself was the bottleneck. During disasters in Vietnam, the primary way to get help is community outreach on Facebook: families post pleas for rescue and hope their posts go viral enough for someone with resources to see them. If they're lucky, help eventually arrives. During an event of Typhoon Yagi's scale, this ad-hoc method proved unsustainable. Cries for help surged to the point where it became impossible to filter, prioritize, and coordinate rescue efforts effectively.

The Idea

During Typhoon Yagi, I watched something beautiful and heartbreaking unfold on Facebook. Thousands of Vietnamese people with boats, vans, and medical supplies were organizing rescue efforts through Facebook groups. Posts with GPS coordinates, photos of stranded families, desperate pleas for help. When a post went viral, help would arrive. But with thousands of posts flooding in simultaneously, many got buried. Many went unseen. Some families waited days.

One effort that stood out was ThongTinCuuHo (https://thongtincuuho.org/) (translated: "Rescue Information"). The site crawls and aggregates Facebook posts into a map so rescuers can see who needs help and where. It was incredible to witness the power of community organizing in real-time. Instead of hundreds of posts scattered around Facebook, rescuers had a centralized view of the landscape.

Still, one gap kept lingering in my mind. With countless posts now visualized on a map, how do we ensure no dot is left uncleared? What if we could coordinate disaster rescue teams in real-time? What if we could cut response time from days down to minutes?

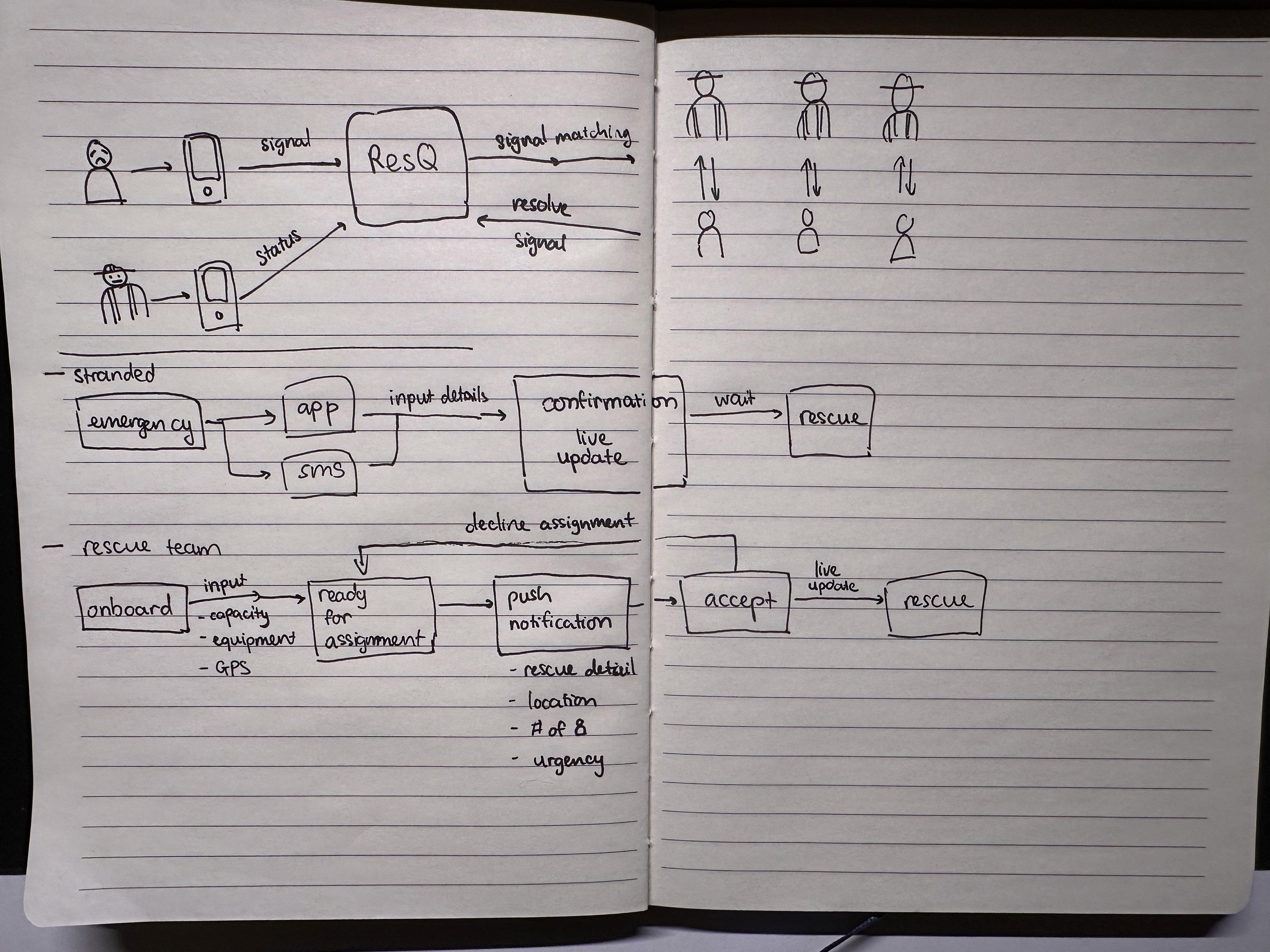

That's when ResQ was born: a web platform where anyone stranded during a disaster can send an SOS, get matched with the nearest available rescuer with the right equipment in seconds, and receive help within 8-15 minutes. With a concrete idea in mind, I picked up my scratchpad.

Design Phase: Crazy 8s to Hi-Fi Prototypes

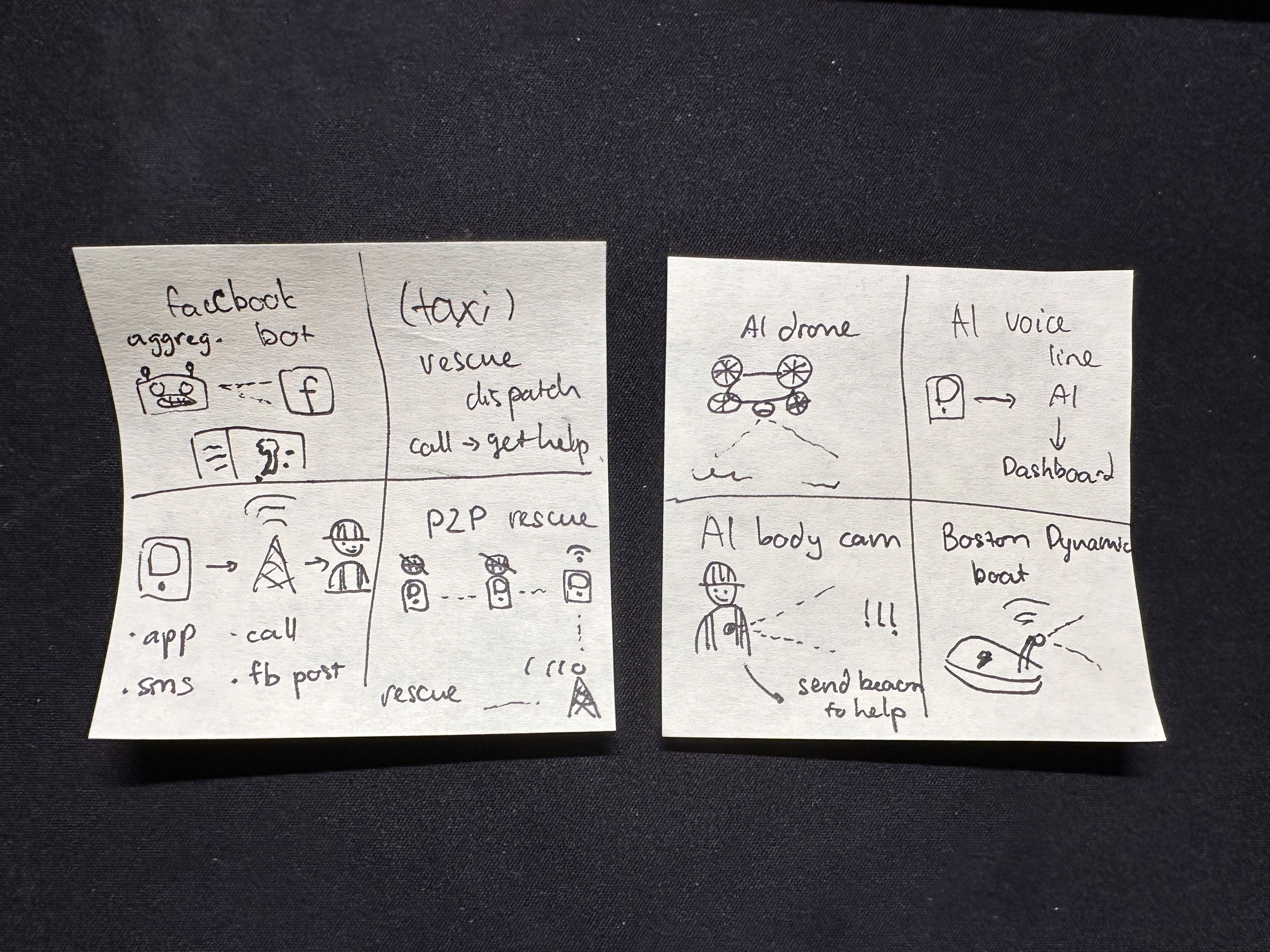

Like any good project, this one started with rapid ideation. I ran a Crazy 8s exercise. If you're not familiar with the approach, Crazy 8s is an effective rapid sketching method: you fold a sheet of paper into 8 sections and sketch 8 different interface concepts in 8 minutes (1 minute each). This forced me to explore UI variations quickly without overthinking. From these rough sketches, the ResQ concept emerged as the most viable solution.

Next, I mapped out the complete user flows: a stranded person sending an SOS, a rescuer receiving and accepting assignments, and a command center dashboard monitoring everything in real-time.

In my mind, it's critical to nail down the UX from the get-go. Each screen had to be designed for stress conditions. When someone is stranded in floodwater, they don't have time for complex forms. One tap, location sent, help on the way. Similarly for rescuers, there's no downtime during rescue ops. Every second matters. Right after picking someone up, the team needs to see their next destination ASAP.

Technical Design

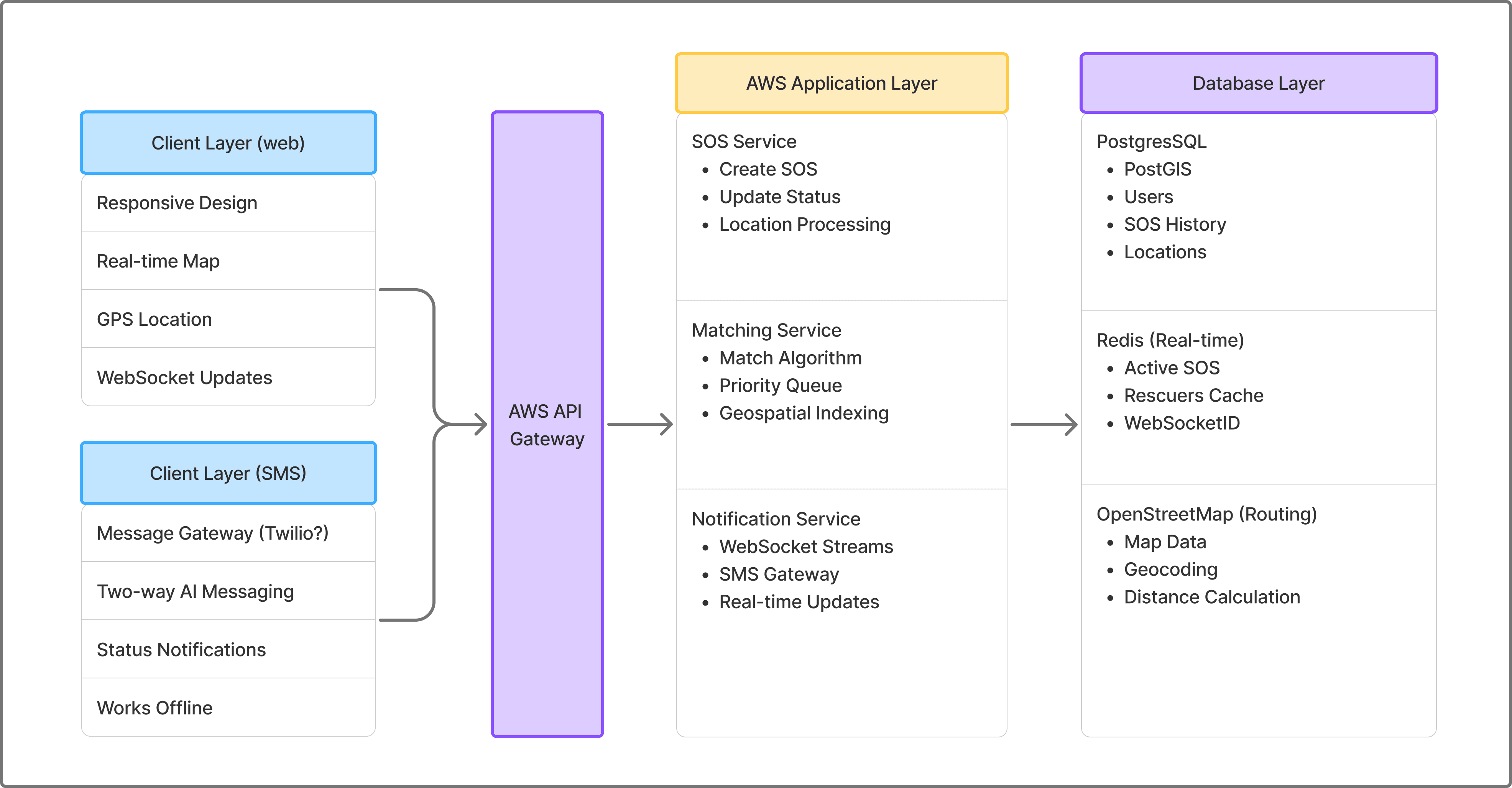

We designed a system that would work in a scenario like Typhoon Yagi. To be transparent: the full technical architecture you'll see below was developed after the hackathon, though I'm including it here to give you the complete picture. During the hackathon itself, we focused on defining the API contract first, and there's a strategic reason why, which I'll explain in the next section.

More on the tech stack:

Frontend: React, React-Query, Zustand, Socket.io

Backend: Node.js (Express), Python (FastAPI)

Database: PostgreSQL + PostGIS (geospatial queries), Redis (in-memory cache)

Infrastructure: AWS (API Gateway, Lambda, RDS, ElastiCache), Docker

External Services: Twilio SMS, OpenStreetMap

Real-time: Socket.io (WebSocket with HTTP long-polling fallback)

To satisfy the high availability requirement, we use two communication channels to ensure reliability: Web and SMS.

1. WebSocket (Socket.io) for real-time updates when internet is available:

Rescuer location updates stream to victims every 2-3 seconds

Instant status changes (SOS accepted, rescue in progress, completed)

Command center dashboard receives live updates of all active rescues

Low latency (~100-200ms) with persistent connections

2. SMS via Twilio as failover when network infrastructure fails:

Two-way messaging for status updates

Works on 2G networks and even with damaged cell towers

Critical for disaster scenarios where internet is unreliable

For this technical design, we looked extensively at how live matching platforms perform their algorithms. This design is heavily influenced by how Uber uses gRPC streams for driver connections with SMS fallbacks, and how AWS IoT Core handles device communication with multiple protocols[^4].
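To make the two-channel idea concrete, here is a minimal sketch of the failover logic: try the real-time channel first, and fall back to SMS if it is unavailable. Everything named here (`StatusNotifier`, `ChannelUnavailable`, the two sender callables) is hypothetical and stands in for the real Socket.io and Twilio integrations, which are not shown.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


class ChannelUnavailable(Exception):
    """Raised by a channel when it cannot deliver a message."""


@dataclass
class StatusNotifier:
    # Both senders are hypothetical callables: (recipient, message) -> None.
    send_realtime: Callable[[str, str], None]
    send_sms: Callable[[str, str], None]
    channels_used: List[str] = field(default_factory=list)

    def notify(self, recipient: str, message: str) -> str:
        """Try the real-time channel first and fall back to SMS on failure."""
        try:
            self.send_realtime(recipient, message)
            channel = "websocket"
        except ChannelUnavailable:
            self.send_sms(recipient, message)
            channel = "sms"
        self.channels_used.append(channel)
        return channel


def offline_socket(recipient: str, message: str) -> None:
    # Stand-in for a socket emit when the client has no internet.
    raise ChannelUnavailable(f"no live connection for {recipient}")


sent_sms: List[Tuple[str, str]] = []


def fake_sms(recipient: str, message: str) -> None:
    # Stand-in for an SMS gateway call; it only records the message.
    sent_sms.append((recipient, message))


notifier = StatusNotifier(send_realtime=offline_socket, send_sms=fake_sms)
used_channel = notifier.notify("victim-42", "SOS accepted")
print(used_channel)
```

Passing the senders in as plain callables keeps the failover rule testable without a network, which matters when the failure case is the one you most need to trust.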

Co-Developing with AI

From this project, Minh and I found many areas where we had to level up. We're both comfortable with design and development, but building a production-ready application of this scale, with multiple personas, in one week (the hackathon timeframe) would be an impossible feat with traditional development. We specialize in front-end and design, so we leveraged AI to accelerate backend development, adapting proven patterns from Uber's and Grab's systems to our disaster response use case. That let us focus on our strengths while AI bridged the gaps. Once we had the design nailed down, AI helped us tremendously with the heavy lifting while we supervised the output quality. The output was anything but sloppy.

Front-End: Google AI Studio

AI Studio became our coding partner for the core front-end functionality. The workflow was straightforward: we'd describe what we needed based on our whiteboard sessions, then organize the generated code into digestible chunks and iterate. We learned to prompt one workflow at a time, letting the AI focus on a single feature before moving to the next. I fortunately learned this lesson pretty early in the journey, after bumping into context window and token limits.

After completing all 3 user flows, we prompted the AI to connect them together. This brings me back to why we defined the API contract early: I knew Google AI Studio excelled at creating demo-ready front-ends, but couldn't build a production-ready full stack application. To work within the hackathon's tool constraints, we asked it to create a mock backend using our API specification. This simulated the connection between rescuers and stranded persons, letting us demonstrate the complete user flow while staying within hackathon bounds. More importantly, this strategy gave us a solid foundation to move beyond AI Studio into production.
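For readers who want to try the contract-first approach, here is a rough sketch of what a mock backend behind a shared contract can look like. It is not our actual API specification: the `RescueApi` interface, the method names, and the status strings are all made up for illustration, and the coordinates are arbitrary.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


@dataclass
class SosRequest:
    sos_id: str
    lat: float
    lng: float
    status: str = "pending"
    rescuer_id: Optional[str] = None


class RescueApi(Protocol):
    """Hypothetical contract shared by the mock and a future real backend."""

    def create_sos(self, lat: float, lng: float) -> SosRequest: ...
    def accept_sos(self, sos_id: str, rescuer_id: str) -> SosRequest: ...
    def get_sos(self, sos_id: str) -> SosRequest: ...


class MockRescueApi:
    """In-memory stand-in that satisfies the contract for demos."""

    def __init__(self) -> None:
        self._requests: Dict[str, SosRequest] = {}

    def create_sos(self, lat: float, lng: float) -> SosRequest:
        sos_id = f"sos-{len(self._requests) + 1}"
        self._requests[sos_id] = SosRequest(sos_id, lat, lng)
        return self._requests[sos_id]

    def accept_sos(self, sos_id: str, rescuer_id: str) -> SosRequest:
        request = self._requests[sos_id]
        request.status = "accepted"
        request.rescuer_id = rescuer_id
        return request

    def get_sos(self, sos_id: str) -> SosRequest:
        return self._requests[sos_id]


def run_demo_flow(api: RescueApi) -> str:
    """Drive the stranded-person and rescuer steps through the contract only."""
    created = api.create_sos(21.03, 105.85)  # arbitrary example coordinates
    api.accept_sos(created.sos_id, "rescuer-7")
    return api.get_sos(created.sos_id).status


final_status = run_demo_flow(MockRescueApi())
print(final_status)
```

Because `run_demo_flow` only depends on the contract, the same calling code can later point at a real backend without changing the front-end side of the conversation.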

At this stage, we hit AI Studio's ceiling. While it excelled at creating beautiful front-end interfaces, the code organization left much to be desired. Despite my efforts to keep files tidy for better retrieval, the AI agent read too broadly and bloated its context, causing even simple queries to take 10-15 minutes. Its default behavior was cramming everything into massive monolithic files. I found myself regularly prompting refactors to maintain clean workflows and manually enforcing DRY (Don't Repeat Yourself) principles. The extra effort paid off: the resulting code was cleaner and more maintainable.

Our effort didn't stop there. Read on to see how we're bringing the idea to production.

Infrastructure: Cursor + Industry Best Practices

With the front-end demo complete and submitted to the hackathon, we turned our attention to production infrastructure. But first, let me rewind to explain how we approached the API and database design, which happened in parallel with the front-end work during the hackathon.

While I was designing the user interfaces, Minh dove into researching API specifications and database schemas. He also researched SMS gateway architectures for reliable communication in low-signal environments. To design a highly available system capable of handling massive traffic bursts during disasters, he used AI to study real-world systems that solve similar problems at scale:

Uber: Real-time driver-rider matching handling millions of requests per second

Grab (Southeast Asia): "Pharos" routing system for Southeast Asia's complex road networks

DHS SARCOP: US disaster response coordination platform[^5]

NICS: Next-generation incident command system for emergency management[^6]

We found so many insights from these battle-tested systems! For example, Uber's H3 geospatial indexing divides Earth into hexagonal cells to handle 1 million matching requests per second[^7]. Why hexagons? They provide uniform neighbor distances (all 6 neighbors are equidistant), better circular approximations than squares, and hierarchical resolution levels from continental (Level 0) to parking-spot precision (Level 15)[^8].
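The "uniform neighbor distances" point is easy to check on a flat grid. The sketch below is not H3 itself (H3 works on a sphere and has its own library); it only compares center-to-center distances for a hexagon's 6 neighbors against a square cell's 8 neighbors, using standard axial coordinates for a pointy-top hex layout.

```python
import math

# Axial-coordinate offsets of the 6 neighbors of a hexagon (pointy-top layout).
HEX_NEIGHBORS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]

# Offsets of the 8 neighbors of a square cell (edge and corner neighbors).
SQUARE_NEIGHBORS = [
    (dx, dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1) if (dx, dy) != (0, 0)
]


def hex_center(q: int, r: int, size: float = 1.0) -> tuple:
    """Center of a pointy-top hexagon at axial coordinates (q, r)."""
    x = size * math.sqrt(3) * (q + r / 2)
    y = size * 1.5 * r
    return (x, y)


def distinct_distances(points) -> list:
    """Sorted, rounded set of distances from the origin cell to each point."""
    return sorted({round(math.hypot(x, y), 6) for x, y in points})


hex_distances = distinct_distances(hex_center(q, r) for q, r in HEX_NEIGHBORS)
square_distances = distinct_distances(SQUARE_NEIGHBORS)

print("hexagon neighbor distances:", hex_distances)
print("square neighbor distances:", square_distances)
```

The hexagon case yields a single distance, while the square case yields two (edge neighbors and diagonal neighbors), which is why "search the ring around this cell" behaves more evenly on a hex grid.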

Meanwhile, Grab's "Pharos" system takes a different approach. It uses road network graphs with Adaptive Radix Trees for even more accurate routing distance calculations in Southeast Asia's complex road layouts. Pharos achieves P99 latency of 10ms for driver updates and 50ms for proximity search[^9].

Both systems share key architectural principles:

In-memory storage (Redis) for speed

Geographic sharding to avoid cross-region queries

Event streaming (Kafka) to separate real-time matching from analytics

Dual communication channels (WebSocket + SMS fallback)

These patterns significantly shaped how we approached development for our disaster response context. To ensure timely rescues and real-time assurance, geospatial partitioning and in-memory caching on AWS infrastructure are more than necessary.

From this point on, Cursor became our primary development partner for building production infrastructure. I'll save the deep technical implementation details for a future post. This blog is already getting long!

Thoughts After Working with AI End-to-End

Building ResQ with AI taught us invaluable lessons about where AI excels and struggles, and what it means to be a human architect in an AI-accelerated workflow. Through this journey, I realized we're at a turning point where what it means to be a "good developer" is fundamentally shifting.

Where AI Excelled

1. Research and Pattern Recognition

You've probably heard people calling LLMs "the ultimate intern." I agree, though I prefer thinking of AI as a goofy but highly effective collaborator 😅. The key is giving it clear instructions. When we needed to understand how Uber and Grab handle real-time matching, I prompted it to find engineering blogs, synthesize the architectural patterns, provide citations for everything, and cross-check its findings. And it did, rapidly pulling insights across multiple systems with proper sources attached. For a better result, I limited the scope of each prompt to one company at a time and cross-checked separately after the insights were synthesized.

The breakthrough was realizing AI is incredibly capable when you tell it what standards to meet. The tool is only as good as the person using it. With the right prompts, AI delivers thorough, well-sourced research in minutes. Researching the traditional way and compiling the insights manually would have taken me weeks.

2. Speed-Climbing the Learning Curve

Neither of us had deep experience with PostGIS, WebSocket race conditions, or SMS gateway architectures. AI became our patient mentor:

PostGIS: Explained spatial indexes, the Haversine formula for calculating distances on a sphere, and why GiST (Generalized Search Tree) indexes matter for geospatial performance

Real-time Systems: Walked us through optimistic locking patterns and queue systems to prevent multiple rescuers from accepting the same SOS

Infrastructure as Code: Guided us through AWS CDK patterns for secure, scalable geospatial infrastructure
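As a taste of the first item, here is a small sketch of the Haversine formula and how it can pick the nearest available rescuer from a list. This is plain Python for illustration only: in a PostGIS setup the distance work would happen in the database, and the `Rescuer` class, the IDs, and the coordinates below are made up.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


@dataclass
class Rescuer:
    rescuer_id: str
    lat: float
    lon: float
    available: bool = True


def nearest_available(
    rescuers: Iterable[Rescuer], lat: float, lon: float
) -> Optional[Rescuer]:
    """Return the closest rescuer who is free, or None if nobody is."""
    candidates = [r for r in rescuers if r.available]
    if not candidates:
        return None
    return min(candidates, key=lambda r: haversine_km(lat, lon, r.lat, r.lon))


team = [
    Rescuer("boat-1", 21.00, 105.80),
    Rescuer("boat-2", 21.05, 105.85, available=False),  # closest, but busy
    Rescuer("van-3", 21.10, 105.90),
]
chosen = nearest_available(team, 21.04, 105.84)
print(chosen.rescuer_id if chosen else "no rescuer available")
```

A linear scan like this is fine for a demo, but it checks every rescuer on every request, which is the cost that spatial indexes such as GiST are meant to avoid.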

These are just three examples, but they reveal AI's value: it doesn't just give you what to do. The LLM will happily explain the why behind technical decisions if you ask. It's like having access to years of accumulated engineering wisdom, distilled into digestible explanations. We absorbed knowledge that normally takes months to learn through trial and error, compressed into days of focused conversation with AI.
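The optimistic locking idea from the list above is worth a small illustration too. This sketch assumes a version number stored on each SOS record: a rescuer's accept only succeeds if the version is still the one they read. The `SosStore` class is an in-memory stand-in, not our real data layer; in SQL the same check would typically be an `UPDATE ... WHERE version = ...` that reports how many rows it changed.

```python
import threading
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class SosRecord:
    sos_id: str
    version: int = 0
    rescuer_id: Optional[str] = None


class SosStore:
    """In-memory stand-in for a table with a version column."""

    def __init__(self) -> None:
        self._rows: Dict[str, SosRecord] = {}
        # The lock plays the role of the database's atomic single-row update.
        self._lock = threading.Lock()

    def add(self, sos_id: str) -> None:
        self._rows[sos_id] = SosRecord(sos_id)

    def read(self, sos_id: str) -> SosRecord:
        row = self._rows[sos_id]
        # Return a snapshot copy so callers cannot mutate the stored row.
        return SosRecord(row.sos_id, row.version, row.rescuer_id)

    def accept(self, sos_id: str, rescuer_id: str, expected_version: int) -> bool:
        """Compare-and-set: succeed only if the row is unchanged since it was read."""
        with self._lock:
            row = self._rows[sos_id]
            if row.version != expected_version or row.rescuer_id is not None:
                return False
            row.rescuer_id = rescuer_id
            row.version += 1
            return True


store = SosStore()
store.add("sos-1")

# Two rescuers load the same SOS before either one accepts it.
seen_by_a = store.read("sos-1")
seen_by_b = store.read("sos-1")

a_won = store.accept("sos-1", "rescuer-a", seen_by_a.version)
b_won = store.accept("sos-1", "rescuer-b", seen_by_b.version)
print(a_won, b_won, store.read("sos-1").rescuer_id)
```

The losing rescuer gets a clear "someone else took it" answer instead of a silent double assignment, and the app can immediately offer them the next SOS.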

3. Rapid Prototyping and Iteration

During the hackathon, AI Studio helped us build three complete user flows (stranded person, rescuer, command center) in a week. The speed was genuinely impressive. We'd describe a workflow, and within minutes, we had working UI components with state management and basic routing. This rapid iteration let us test ideas and pivot quickly.

Where AI Slop

Will Smith eating spaghetti.

You can already tell from the rest of this blog that there are many areas where AI hurts more than it helps.

1. File Organization and Code Architecture

This was AI's biggest weakness. Left to its own devices, AI wanted to put everything in monolithic files. By the second user flow, we had a 3,000-line JavaScript file that was nearly impossible to navigate.

The lesson: You need to architect the codebase structure yourself. We learned to prompt AI Studio: "First, design a file structure for this feature" before writing any code. With Cursor, we got better at maintaining modular architecture, but it still required constant vigilance. AI thinks in solutions, not in maintainable systems.

2. Context Management

Both AI Studio and Cursor struggled with context management in different ways:

AI Studio: Read too much, bloated its context, causing even simple queries to take 10-15 minutes. We learned to be surgical: only show relevant files, break large tasks into smaller prompts.

Cursor: Better at managing context but still needed guidance on what to focus on. The most effective workflow was: "Here's the problem, here are the relevant files, here's what I've tried."

The lesson: Treat context like a scarce resource. Feed AI only what it needs. There are many blogs on this topic if you're interested in how to optimize context window and token usage.

3. The DRY Principle (Don't Repeat Yourself)

AI loves to duplicate code, partly because of its monolithic tendency. When we built the rescuer interface, it recreated location tracking logic instead of reusing what we'd built for the stranded person flow. We found ourselves constantly prompting: "Extract this into a reusable utility" or "Use the existing LocationService instead of reimplementing." Once again, we needed to be in the driver's seat and remind AI of the optimal path we had designed.

The lesson: AI optimizes for solving the immediate problem, not long-term maintainability. Code review is your responsibility.
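Here is roughly the shape we kept steering the AI toward: one shared location utility that both flows depend on. Only the `LocationService` name comes from our prompts; the methods, the flow classes, and the rounding rule in this sketch are hypothetical.

```python
from typing import Callable, Dict, Tuple

Coordinates = Tuple[float, float]


class LocationService:
    """Single home for location logic shared by every user flow."""

    def __init__(self, read_position: Callable[[], Coordinates]) -> None:
        # read_position is a hypothetical hook for the device's location source.
        self._read_position = read_position

    def current_position(self) -> Coordinates:
        lat, lon = self._read_position()
        if not (-90 <= lat <= 90 and -180 <= lon <= 180):
            raise ValueError(f"invalid coordinates: {lat}, {lon}")
        # Validation and rounding live here once, not in each flow.
        return (round(lat, 5), round(lon, 5))


class StrandedPersonFlow:
    def __init__(self, location: LocationService) -> None:
        self.location = location

    def build_sos(self) -> Dict[str, object]:
        lat, lon = self.location.current_position()
        return {"type": "sos", "lat": lat, "lon": lon}


class RescuerFlow:
    def __init__(self, location: LocationService) -> None:
        self.location = location

    def build_position_update(self, rescuer_id: str) -> Dict[str, object]:
        lat, lon = self.location.current_position()
        return {"type": "position", "rescuer_id": rescuer_id, "lat": lat, "lon": lon}


shared = LocationService(lambda: (21.0285123, 105.8542456))  # made-up reading
sos = StrandedPersonFlow(shared).build_sos()
update = RescuerFlow(shared).build_position_update("rescuer-7")
print(sos, update)
```

With this structure, a fix to validation or precision lands in one class and both flows pick it up, which is exactly what the duplicated version could not guarantee.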

Wrapping Up, Horizon, and Future of the Project

Climate change means more extreme weather, and traditional emergency systems (phone calls, Facebook posts, and hope) are showing their cracks. We hope that disasters like Yagi never happen again. But if they do, we hope that ResQ will be the reason someone survives.

To keep our eyes on the horizon and remember why this project matters, Minh and I keep these numbers in front of us and refer to them frequently.

Scale Projections for Vietnam:

Population at risk: 19 million people in northern provinces[^3]

Target response time: 8-15 minutes average (vs. hours/days with Facebook coordination)

System capacity: Designed for 10,000 concurrent SOS requests per hour

Critical mass needed: 500+ active rescuers per 100,000 population

I also have some closing thoughts on AI. Before this hackathon, I'd used AI to help with small coding tasks. This project was different: a system rapidly built by vibe. As astonishing as this whole thing seems, I learned that without human direction, the product is bland and lacks any flavor. Minh and I were the architects, guiding the AI every step of the way, especially at the beginning to set the direction. Without the effort and understanding of what people went through during the typhoon, none of this would have materialized.

AI helped us code faster. It explained concepts we didn't understand and how big corporations solve seemingly impossible problems. However, AI couldn't design for users in extreme stress or feel the urgency of lost lives. The vision was ours. The persistence was ours. The final product was ours: built with AI, but architected by humans who cared deeply about solving this problem.

References

[^1]: UN OCHA. (2024). *Viet Nam: Typhoon Yagi - Situation Update No. 5*. Retrieved from https://www.unocha.org

[^2]: UNDP & Government of Viet Nam. (2024). *Viet Nam Multi-Sector Assessment (VMSA) Report for Typhoon Yagi Recovery*.

[^3]: UN Humanitarian Action. (2025). *Viet Nam Joint Response Plan for Typhoon Yagi and Floods*. Retrieved from https://humanitarianaction.info

[^4]: AWS. (2024). *Field Notes: Fleet Tracking Using Amazon Location Service with AWS IoT*. Retrieved from https://aws.amazon.com

[^5]: DHS Science & Technology. (2024). *SARCOP: One Team. One Mission. One Map*. Retrieved from https://dhs.gov

[^6]: WICS Corporation. (2016). *Next-Generation Incident Command System (NICS) Concept of Operations*.

[^7]: Uber Open Source. (2024). *H3: Hexagonal Hierarchical Geospatial Indexing System*. Retrieved from https://github.com/uber/h3

[^8]: Uber Engineering. (2018). *H3: Uber's Hexagonal Hierarchical Spatial Index*. Retrieved from https://h3geo.org

[^9]: Grab Engineering. (2020). *Pharos - Searching Nearby Drivers on Road Network at Scale*. Retrieved from https://engineering.grab.com

[^10]: AWS. (2024). *Process events asynchronously with Amazon API Gateway and AWS Lambda*. Retrieved from https://docs.aws.amazon.com/prescriptive-guidance

[^11]: DHS Science & Technology. (2024). *SARCOP: Search and Rescue Common Operating Platform*.

Still Evolving!

Thanks for reading! ☕

If you made it this far, thank you! This blog turned into quite the deep dive. Building ResQ has been one of the most meaningful projects I've worked on, and I'm grateful you took the time to follow along.

If anything in this post resonated with you, or if you're working on something similar, I'd love to connect! Find me on LinkedIn! I'm always excited to chat about disaster tech, AI-assisted development, or really anything you're passionate about building.

Now if you'll excuse me, I need to refill my coffee and get back to work. 😊